# Fast CASPaxos

Table of Contents

Introduction

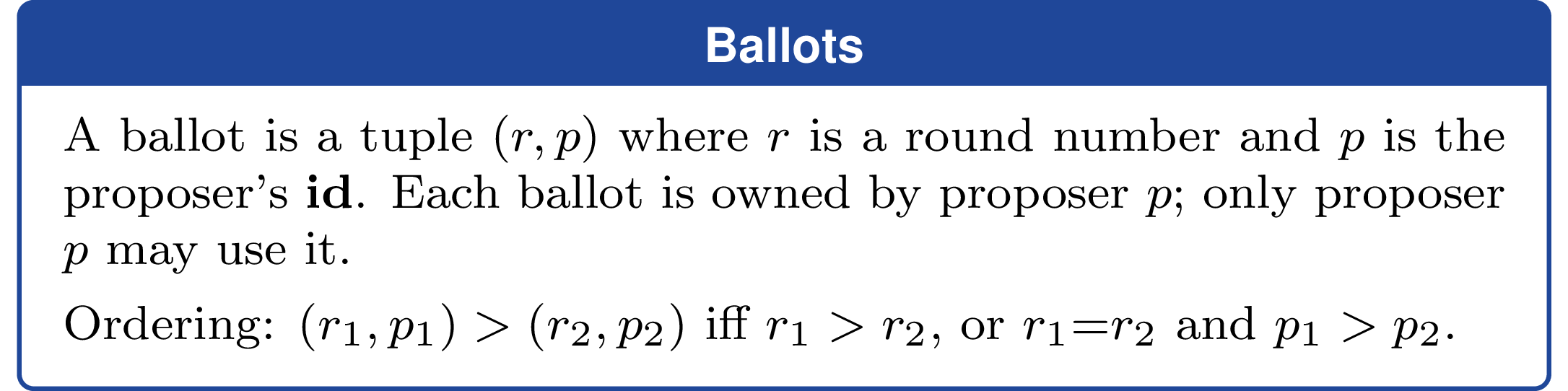

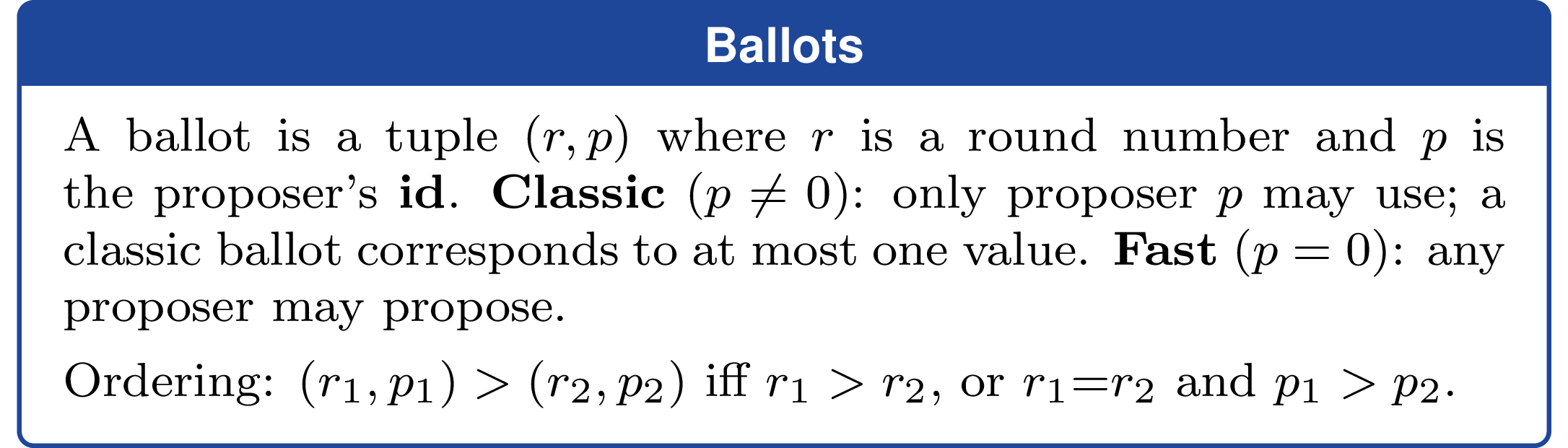

Classic Paxos (aka “single-decree Paxos”) lets a group of servers agree on a single, immutable value. It divides its responsibilities into two main roles: proposers, which initiate rounds and choose candidate values, and acceptors, which persist promises and accepted values. In most implementations, these roles are bundled together into every server, but it’s common to talk about them as though they are separate processes and we won’t deviate from the norm in this post.

CASPaxos extends Paxos into a rewritable register, so proposers can mutate the value over time while maintaining strong consistency guarantees (linearizability) so that no acknowledged write is ever clobbered. Classic Paxos requires two messaging round-trips to decide on a value. That is, it operates in 2 phases:

- a

preparephase where a proposer tries to become leader and learns the current state from acceptors, and - an

acceptphase where that proposer asks acceptors to accept a value.

A common Paxos optimization is to have each accept message piggyback the next prepare message so the same proposer can update the register via a single accept+prepare message instead of needing two separate round-trips.

My contribution is Fast CASPaxos, which lets any proposer update the register in a single accept+prepare request, not only the proposer that already holds leadership. It works by leveraging an insight from Fast Paxos. After coming up with Fast CASPaxos and going through all of the work to propagandize myself about it through deterministic simulation testing, TLA+ models, showers, etc, I discovered that key ideas were already discovered by Heidi Howard and presented in her thesis titled Distributed consensus revised. Still, Fast CASPaxos brings together those ideas and the core idea from CASPaxos in a way that I believe is novel and it identifies one point along the Pareto frontier of optimal distributed consensus algorithms. An interesting side-point about Fast CASPaxos is that it’s cheap to switch between leadered and leaderless consensus at runtime, so theoretically you could create an adaptive algorithm that lets you decide based on observed conditions (conflict rates, relative latency, available servers, etc).

The rest of the post covers leadered-vs-leaderless consensus, CASPaxos, and Fast CASPaxos.

Leader-based vs leaderless consensus

Classic Paxos, Multi-Paxos, Raft, and CASPaxos are all examples of leader-based consensus. In leader-based consensus, before a proposer can commit a value, it must first obtain mutually exclusive (but revocable) rights to do so. Leader election grants this right. In Paxos, the prepare phase is where a proposer tries to acquire that right and the accept phase is where it tries to commit a value. If leadership is uncontended then each phase typically takes 1 round-trip (one message back and forth between the proposer and acceptors). Multi-Paxos, Raft, and CASPaxos let the same proposer amortize the cost of becoming a leader by allowing it to continue committing values as long as it is uncontended. The key point is that leadership is about mutual exclusion: only one proposer at a time has the right to commit values. For CASPaxos, the gist of how this works is that every time you commit a value you piggyback leader election for the next value in the same message: each accept request has the next prepare piggy-backed on.

Leadered and leaderless consensus both have a one-round-trip (1 RTT) fast case, but they optimize for different failure and contention patterns. Choosing which is the right approach depends on the scenario - we’ll talk about some cases later.

Leadered consensus lets only the leader commit in 1 RTT. If another proposer wants to commit, it must first become the leader (1 RTT) and then commit. That’s 2 RTT in total if you were to have a different proposer for each commit. If proposers are trying to commit values concurrently, they can end up contending, possibly indefinitely, trying to squeeze those 2 RTT in before the other proposer deposes them as leader. This is the dueling proposers problem.

Leaderless consensus algorithms allow any proposer to commit a value without first obtaining exclusive rights. This is the crux of the Fast Paxos optimization: acceptors are prepared ahead of time for a shared fast round in which proposers can send accept requests directly to acceptors instead of first performing a prepare phase. If enough acceptors receive the same value, it commits in a single round-trip. If concurrent proposers propose conflicting values, the protocol falls back to classic recovery (prepare then accept). So Fast Paxos is leaderless in fast rounds but leadered during recovery.

The cost of that leaderless fast path is a larger quorum. A later classic prepare quorum must still be able to tell which value, if any, could have been committed in the fast round. In a classic ballot there is only one proposer, so the ballot identifies a single candidate value. In a fast ballot many proposers can race, so different acceptors may report different values for the same ballot. Recovery therefore has to group responses by value at the highest fast ballot it sees and choose the unique maximum, or any tied maximum. That is why fast rounds need a supermajority: not because the fast proposer needs extra votes for its own sake, but because later recovery must be unable to reinterpret the round as having decided a different value. Leaderless consensus is attractive in many scenarios, but those larger quorums and the risk of conflicts mean it generally performs worse than leadered consensus under contention, which is why the ability to switch between the two modes is appealing.

When each mode fits best

Leadered

- High write rate. If one proposer is likely to drive a long sequence of updates, leadered mode amortizes prepare costs across many operations instead of paying them repeatedly.

- High conflict rate. When independent proposers frequently want different next values, a leader reduces repeated proposer-versus-proposer collisions and gives more stable progress than optimistic fast rounds. To take advantage of this, you need to route requests to a stable leader.

Leaderless

- Geo-distributed, low-conflict deployments. When network latency is high, leaderless 1 RTT commits are particularly attractive, but the penalty of contention is more pronounced.

- Infrequent writes. When writes are infrequent and proposers have a chance to learn the latest committed value without going through consensus, fast rounds allow 1 RTT commits at any proposer.

- Almost-everywhere agreement. When concurrent proposers are proposing the same value anyway, shared fast rounds let them proceed without dueling. The Rapid cluster membership algorithm uses Fast Paxos for exactly this property: Rapid’s cut detector produces proposals based on observer alerts and delays action until churn stabilizes into the same multi-process cut, resulting in very low conflict rates among proposers, allowing them to commit in 1 RTT most of the time without going through a leader.

- Register initialization. The initial fast ballot is implicitly prepared, so the first write can skip

prepareentirely and go straight toaccept. That makes 1 RTT initialization especially attractive when a system creates many lightweight registers which may only be written once.

When it’s not clear-cut

I’ve seen arguments saying that the smaller quorum requirements of leadered protocols confer advantages like:

- Reduced chance that a straggler server will slow down consensus

- Improved failure masking (more servers can fail before progress stops)

Those are not unreasonable ideas, but in a leadered protocol, the leader itself can be the straggler and slow down all requests (a gray failure). If the leader crashes you need to first detect that, which is inherently slow, and then elect a new leader. That handoff naturally creates a stutter where progress pauses while the system re-establishes mutual exclusion. The system becomes temporarily unavailable during this period. Leaderless algorithms don’t have these problems. Given that, these attributes are not clear-cut.

CASPaxos

CASPaxos implements a linearizable rewritable register. Instead of replicating an entire log of commands like Raft and MultiPaxos do, each operation has a proposer read the current register value, apply an update function locally, and replicate the resulting value to a quorum of acceptors. That makes it a good fit for small-ish blobs of strongly consistent state such as configuration, leases, and membership metadata.

Every update is protected by a ballot number. Before a proposer can overwrite the register, it has to learn the highest value which might still matter, so previously committed work cannot be clobbered blindly.

The protocol is still classic Paxos-shaped: first prepare a ballot, then accept a value at that ballot. The proposer can piggyback the next ballot’s prepare onto its successful accept, letting the same proposer stay on a 1 RTT steady-state path.

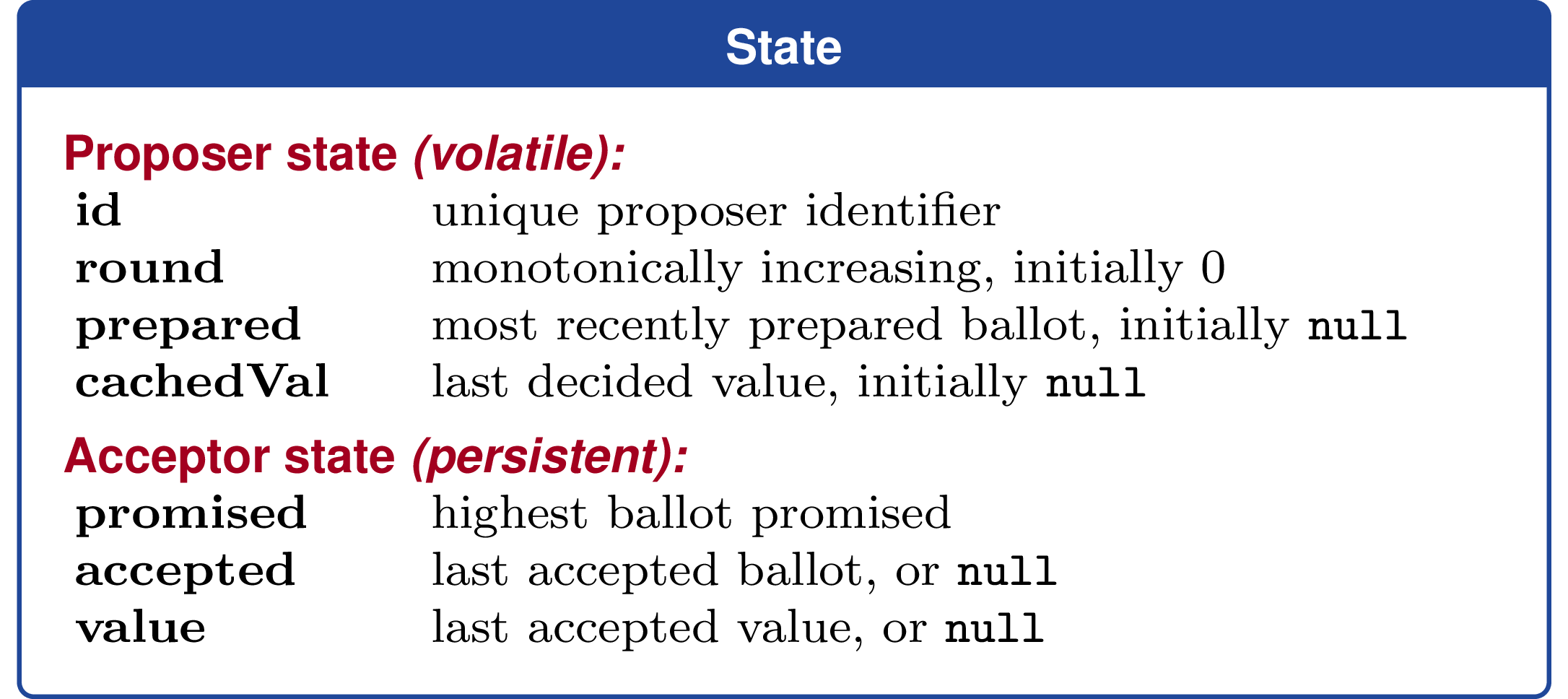

Role-local state

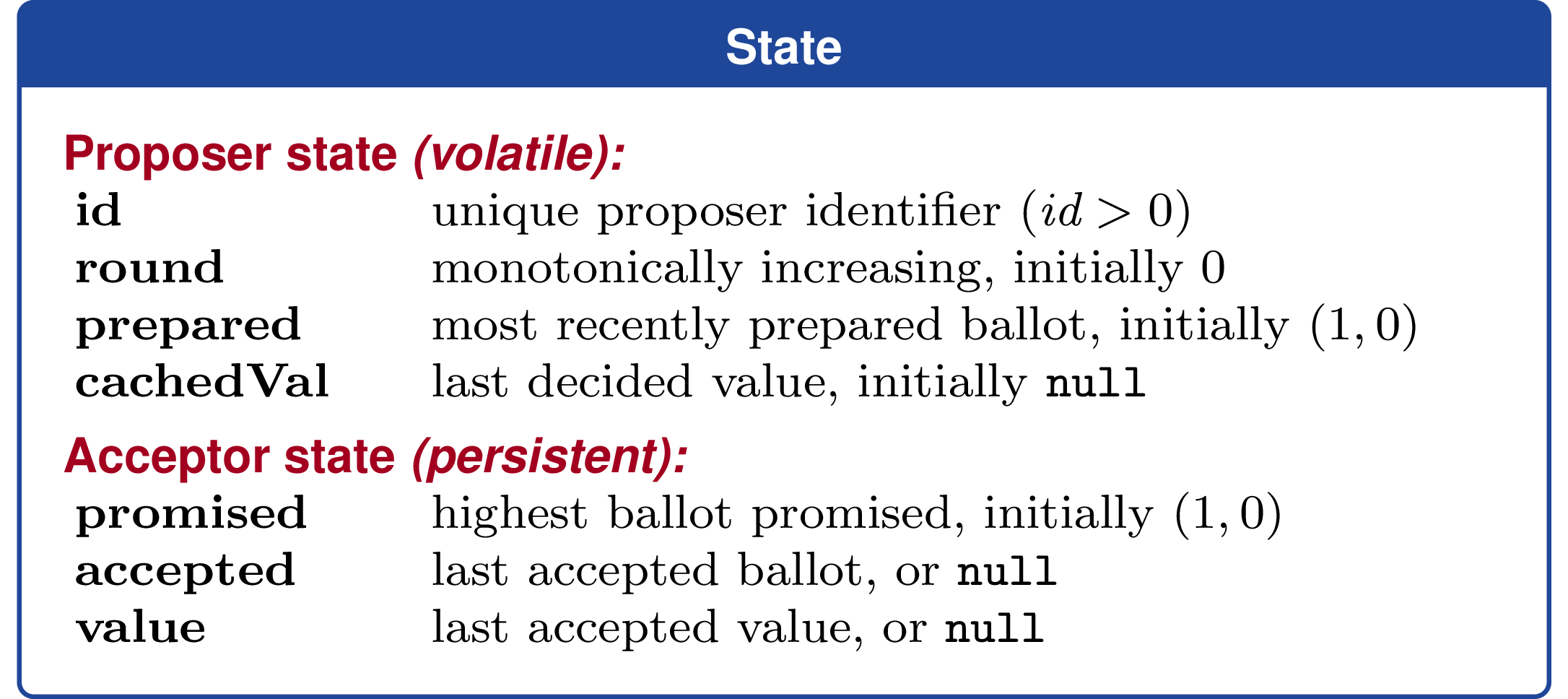

Proposer state (volatile)

id: unique proposer identifierround: monotonically increasing, initially 0prepared: most recently prepared ballot, initiallynullcachedVal: last decided value, initiallynull

Acceptor state (persistent)

promised: highest ballot promisedaccepted: last accepted ballot, ornullvalue: last accepted value, ornull

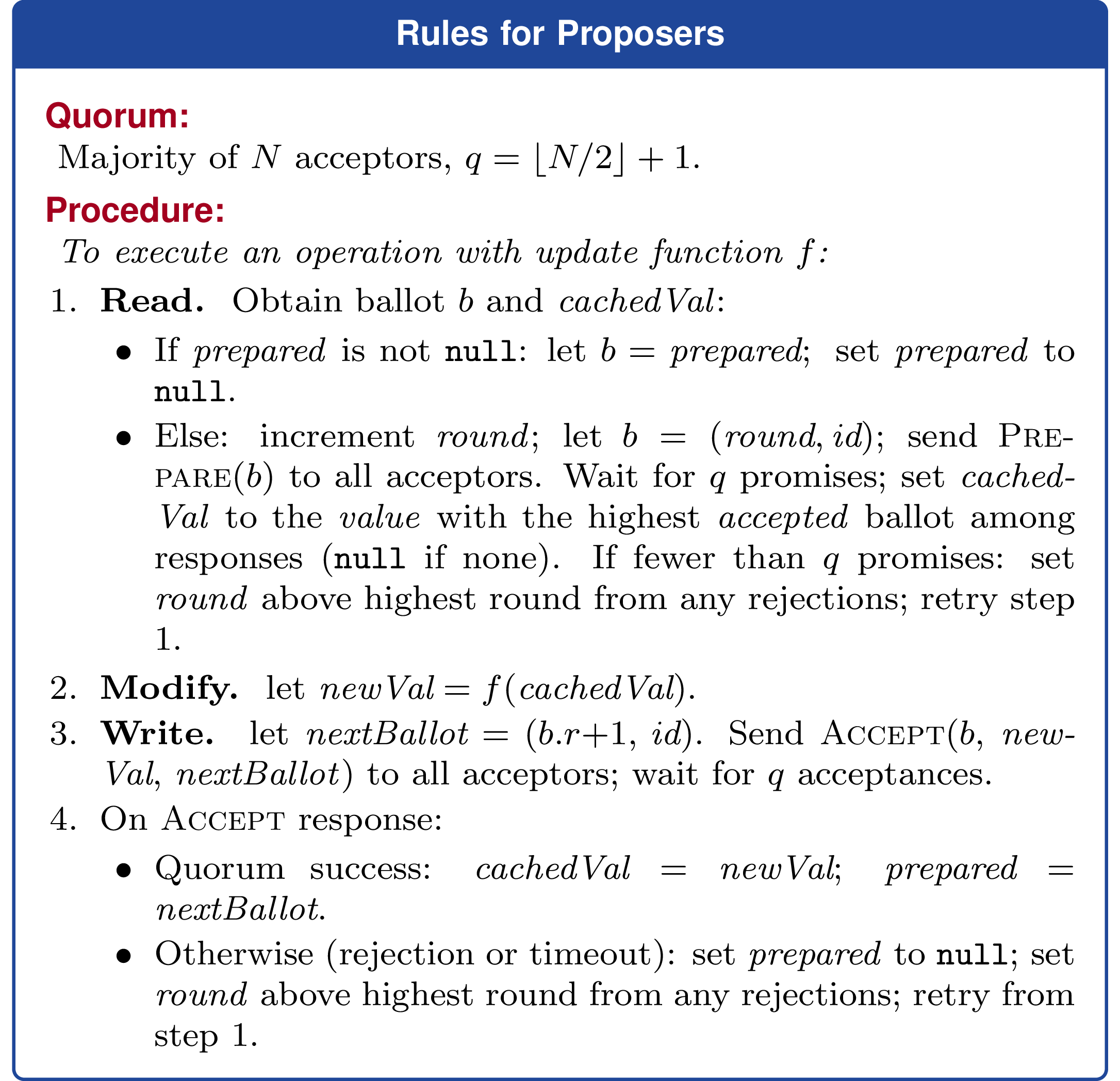

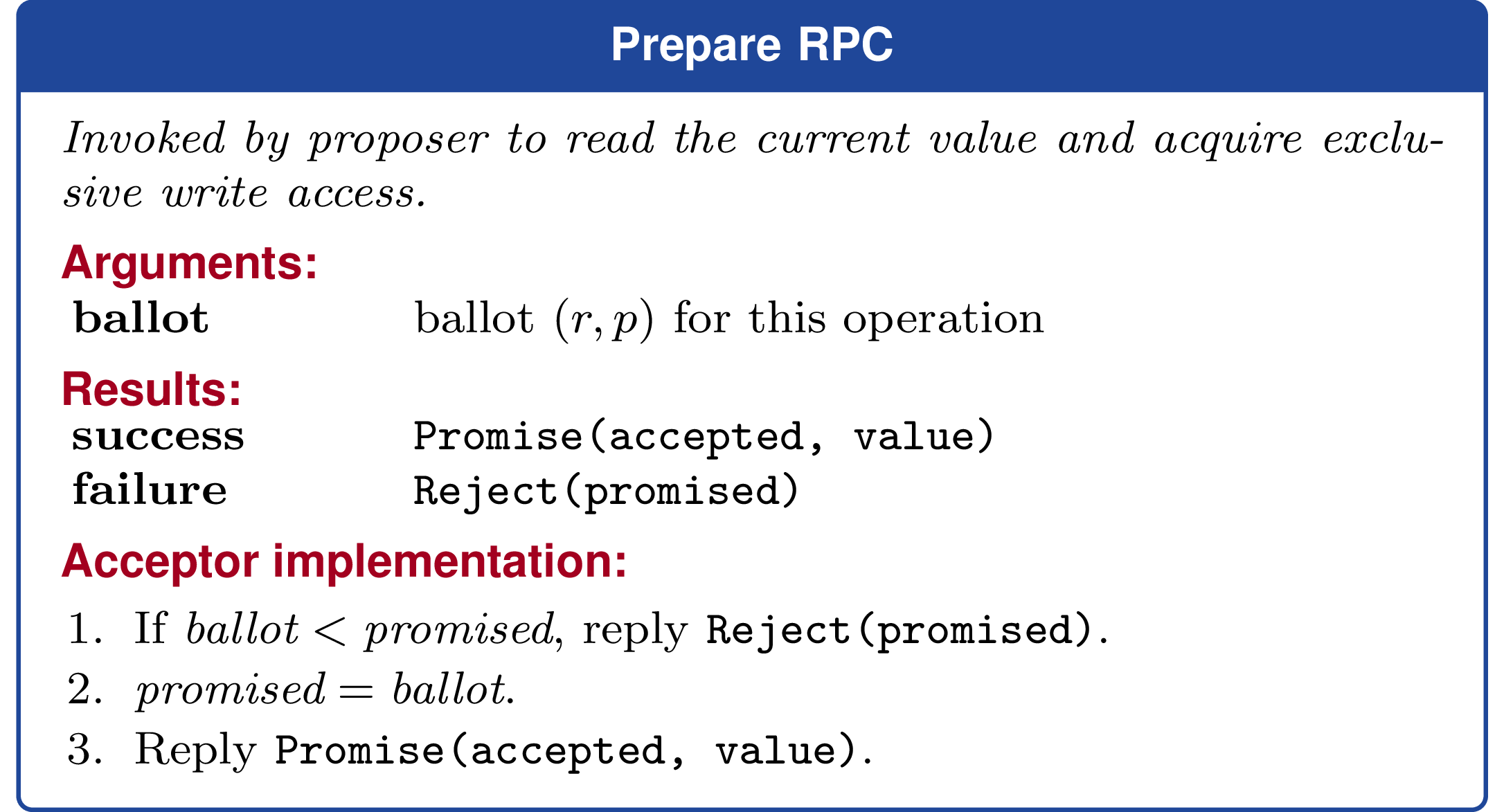

Phase 1: prepare

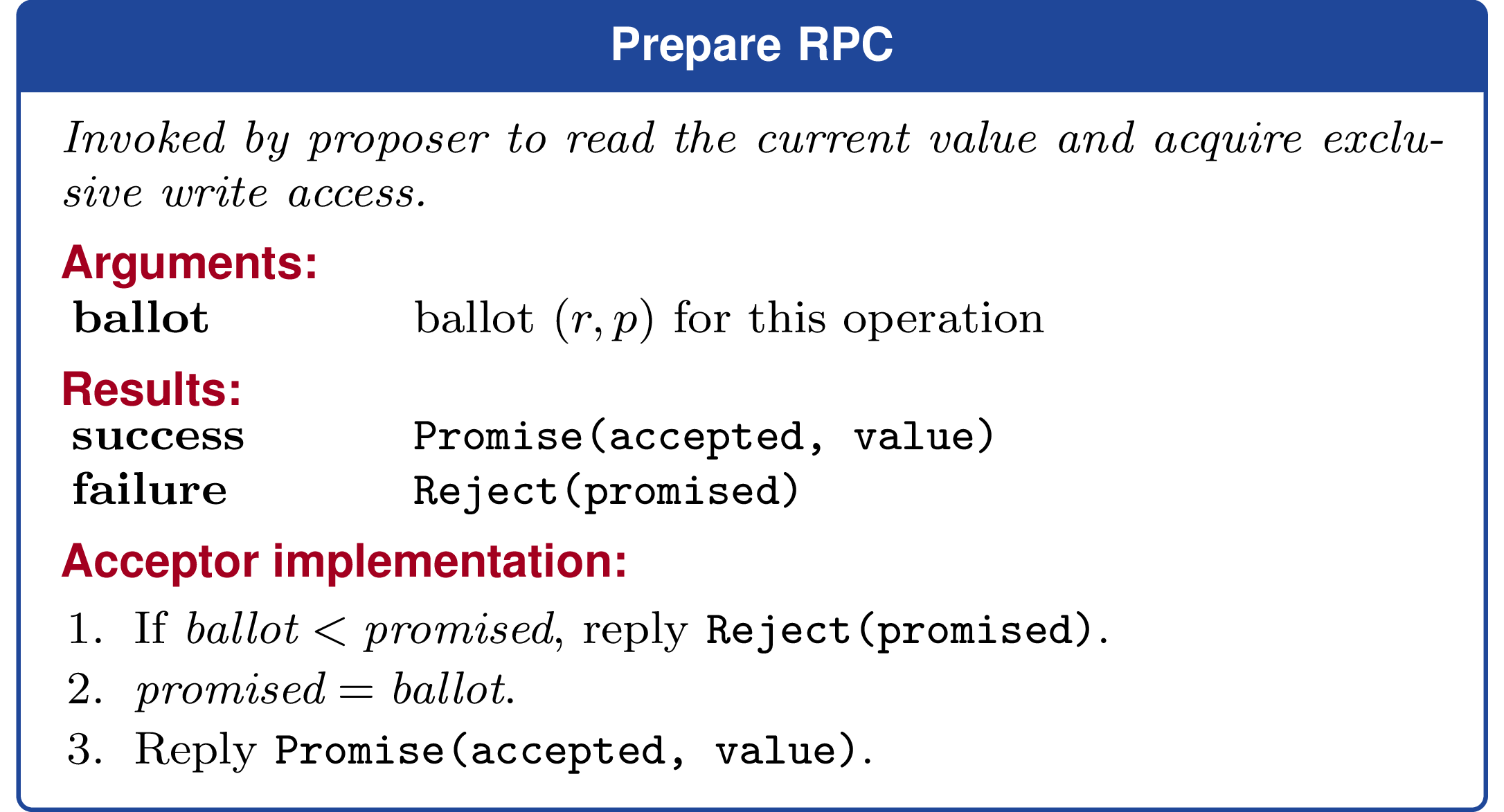

The proposer picks a fresh ballot b = (round, id) and sends Prepare(b) to acceptors. An acceptor compares b with its promised. If b is high enough, it raises promised to b and replies with Promise(accepted, value). Otherwise it replies with Reject(promised).

To succeed, the proposer needs a quorum of Promise responses. If some acceptor reports a higher ballot via Reject(promised), the proposer does not get to ignore it and move on. It must move round above the highest promised ballot it saw and retry.

Once prepare succeeds, the proposer recovers the latest value which might matter:

- if no acceptor in the quorum has accepted anything, then

cachedVal = ⊥ - otherwise, the proposer sets

cachedValto the value paired with the highestacceptedballot it observed

That recovered cachedVal is the safe input to the client’s update function.

Phase 2: accept

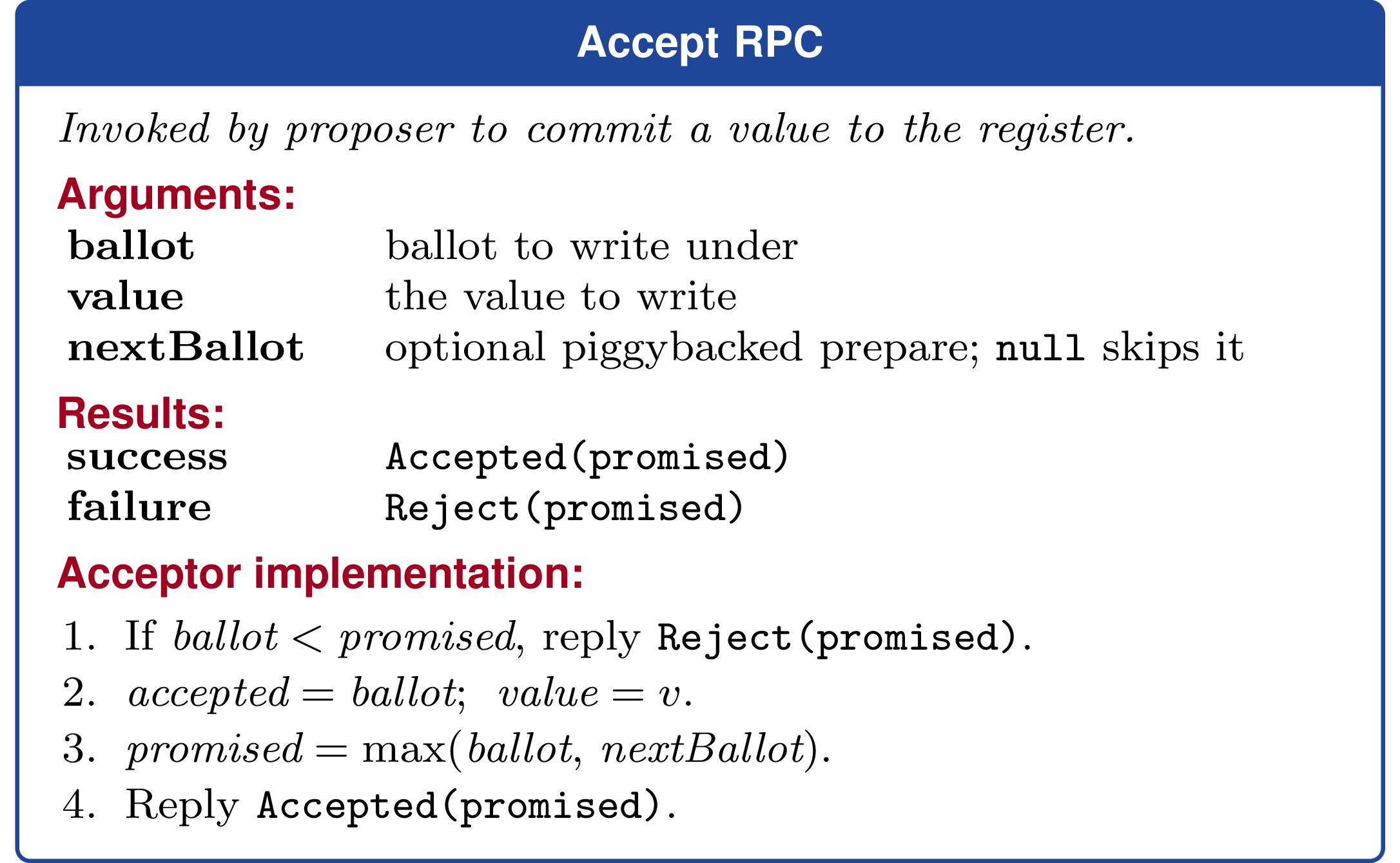

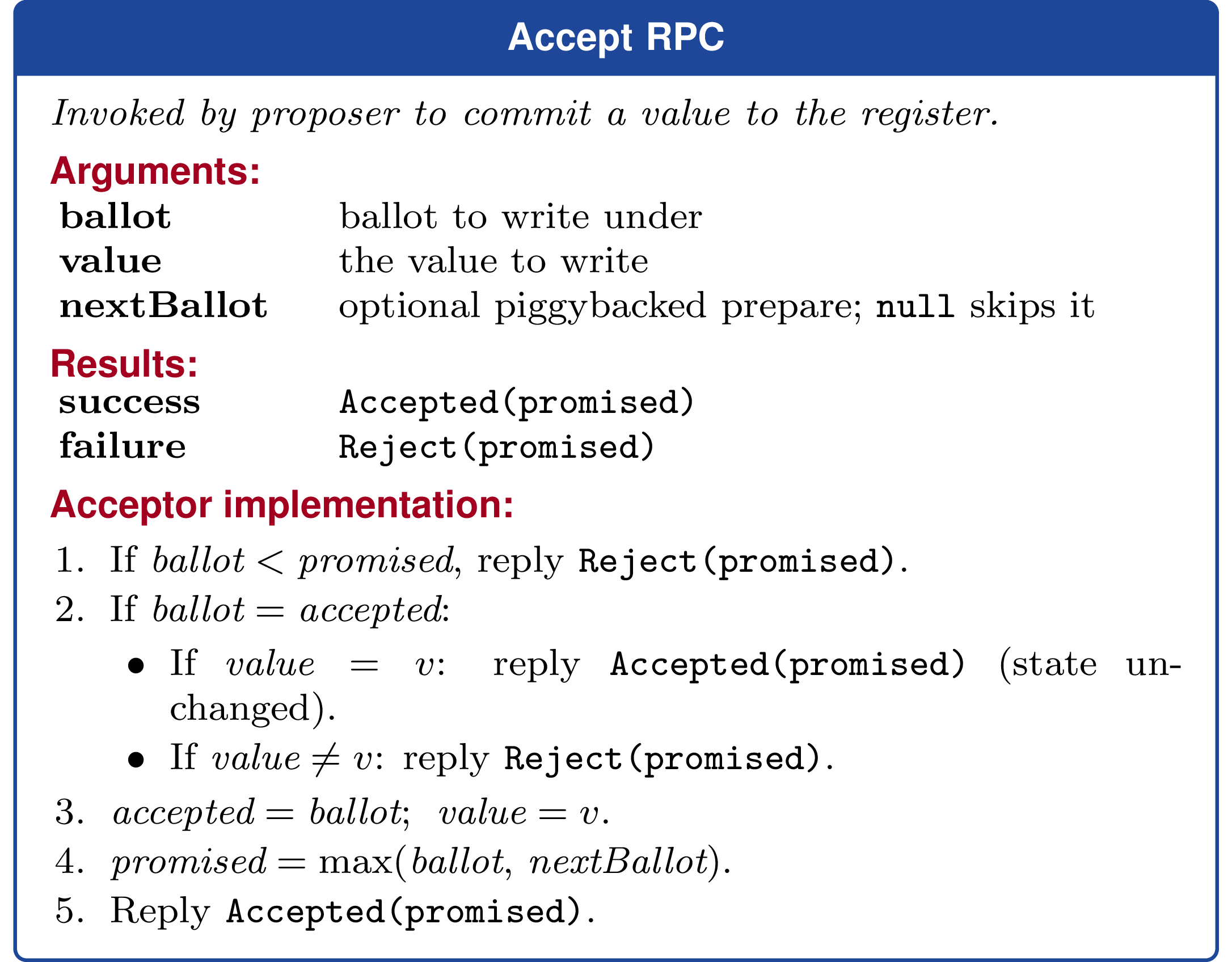

After prepare, the proposer computes newVal = update(cachedVal) and sends Accept(b, newVal, nextBallot) to acceptors.

An acceptor rejects if b is below its promised. Otherwise it:

- stores

accepted = b - stores

value = newVal - raises

promised = max(b, nextBallot)

The value is committed once a quorum accepts it.

That optional nextBallot field is the piggybacked-prepare optimization. Instead of doing:

preparethis roundacceptthis roundprepareagain next time

the proposer does:

preparethis roundacceptthis round and piggyback the next ballot

so the same proposer can cache prepared = nextBallot and skip the next standalone prepare.

The important bits are that prepare is what protects prior work by forcing a proposer to learn the highest value which might still matter, accept is the only step which actually commits a new value, and piggybacking the next ballot does not commit anything by itself; it only prepares the following round.

Fast CASPaxos

Everything above used classic proposer-owned ballots. Fast CASPaxos keeps the same proposer and acceptor roles, the same persistent acceptor state, and the same prepare/accept structure. The core changes relative to CASPaxos are:

- ballots with

proposerId = 0are shared fast ballots, so any proposer may use them - a piggybacked next ballot can therefore prepare either the next proposer-owned classic ballot or the next shared fast ballot

preparestill uses a classic majority, butacceptat a fast ballot needs a larger fast quorum- within a fast ballot, acceptors are first-write-wins: they accept the first value they see at that ballot and reject later different values

- if a later

preparesees that the highest accepted ballot was fast, it tallies values at that ballot and carries forward the unique maximum, or any tied maximum

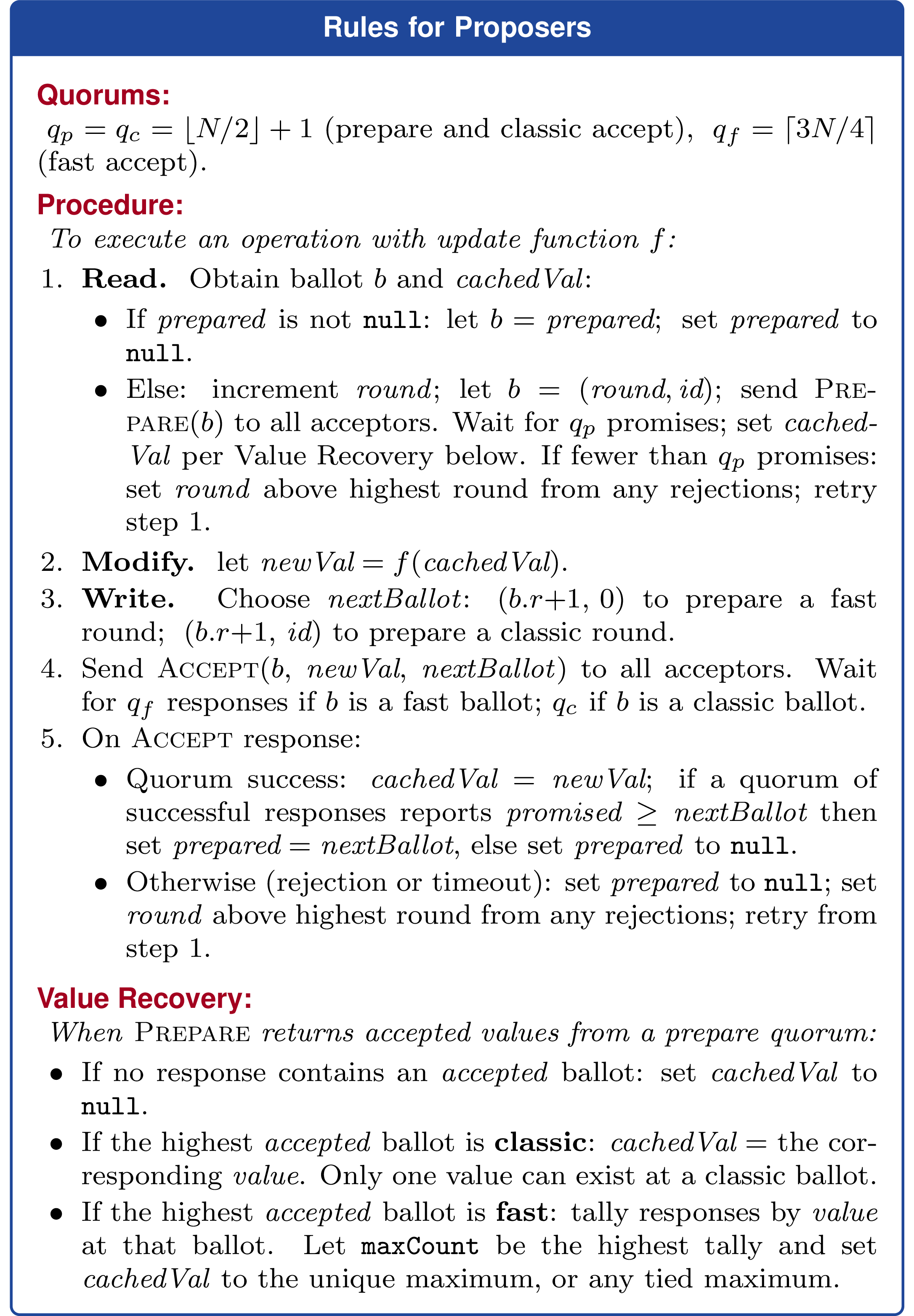

That is enough to turn CASPaxos into Fast CASPaxos without changing acceptor state. If two proposers send different values in the same fast ballot, neither reaches the fast quorum, so the protocol falls back to a classic recovery round which applies that tally rule. The cheatsheet and sketch below summarize the core protocol; the later subsections cover practical refinements and optimizations.

Here is the corresponding Fast CASPaxos protocol summary from the paper:

Protocol sketch

This is simplified to show the core protocol only. It omits duplicate-message handling, cached local views, and the best-effort learn path.

Notation:

- ballots are

(round, proposerId), whereproposerId = 0denotes the shared fast ballot for that round prepareQuorum = classicQuorum = floor(N / 2) + 1fastQuorum = ceil(3N / 4)acceptQuorumFor(ballot) = fastQuorumfor fast ballots andclassicQuorumfor classic ballots- every

prepareresponse returns(acceptedBallot, acceptedValue, maxBallot) prepare(b)succeeds at an acceptor exactly when the response reportsmaxBallot = b

Proposer

function propose(update): b := initial ballot to try

loop: if b is not already prepared: responses := collect Prepare(b) responses if responses with maxBallot == b do not reach prepareQuorum: b := next classic ballot above the highest maxBallot returned continue

currentValue := recoverValue(responses) else: currentValue := previously learned value for b

newValue := update(currentValue) next := optional next ballot to piggyback

responses := collect Accept(b, newValue, next) responses if accepts in responses reach acceptQuorumFor(b): return newValue

b := next classic ballot above the highest conflicting ballot returned

function recoverValue(responses): highest := maximum acceptedBallot reported in the responses

if highest == ⊥: return ⊥

if highest is a classic ballot: return the value paired with highest

// highest is a fast ballot, so several values may appear at that ballot votes := count values among responses whose acceptedBallot == highest return any value tied for the largest countNotes:

preparealways needs only a classic majority. The larger quorum is only for fastaccept.- A proposer only reuses

nextif enough successfulacceptresponses confirm that the promise really landed. - In practice, a proposer only skips

preparewhen it already has a prepared ballot and enough local knowledge to computenewValue.

Acceptor

state: promisedBallot := (1, 0) // initial fast ballot is implicitly prepared acceptedBallot := ⊥ acceptedValue := ⊥

function onPrepare(b): if b >= promisedBallot: promisedBallot := b reply prepareResponse(acceptedBallot, acceptedValue, promisedBallot)

function onAccept(b, v, next): maxBallot := max(promisedBallot, acceptedBallot) if b < maxBallot: reply reject(maxBallot) return

// Optimization: idempotency from possibly multiple proposers if b == acceptedBallot and v == acceptedValue: reply accept(promisedBallot) return

if b == acceptedBallot and v != acceptedValue: reply reject(maxBallot) return

acceptedBallot := b acceptedValue := v

if next != none: promisedBallot := max(promisedBallot, next)

reply accept(promisedBallot)Concurrently committing identical values

First-write-wins only rules out different values at the same fast ballot. To avoid treating identical concurrent proposals as conflicts, acceptors still acknowledge an accept request which exactly matches their current accepted ballot and value. That keeps almost-everywhere-agreement workloads efficient without changing state.

In classic rounds, the same behavior also makes accept retries idempotent.

Register initialization

To allow proposers to initialize a register in 1 RTT, they start with the accept phase for the initial fast round, (1, 0). This way, either they can have their initial value committed if there is no contention, or they will learn of the conflicting ballot and can retry at the prepare phase. Acceptors are initialized with a promised ballot of (1, 0).

Reusing a piggybacked ballot safely

Piggybacking a next ballot is only a latency optimization. A proposer may skip a standalone prepare on its next operation only if a quorum of successful accept responses confirms that the requested next ballot was actually promised. If only some acceptors echoed it, the proposer discards that candidate ballot and falls back to prepare.

Learning decided values

A proposer may send Accept(b, v, next) only if it already knows the register value for b. It can learn that value by running prepare, by committing and reusing the piggybacked next ballot, or via a best-effort learn notification from another proposer.

Learn traffic is only an optimization. If that notification is stale or missing, the proposer falls back to prepare.

Do we even need classic rounds?

Fast CASPaxos could be generalized further to only use fast rounds, and never fall back to classic rounds. Distributed consensus revised contains the necessary relaxations/generalizations. On the other hand, having distinct leadered vs leaderless rounds confers higher fault tolerance and it’s arguably less of a leap for people familiar with Classic Paxos already.

Conclusion

Fast CASPaxos is a small extension to CASPaxos that implements a leaderless linearizable register. It’s conceptually a blend of Fast Paxos and CASPaxos. It’s likely most useful for consistent group membership (eg, Rapid) and metadata replication (eg, Delos and other Consensus for Metadata systems (which is a lot, btw)). I’m happy to have scratched this itch that has been bugging me for a long time.

I uploaded a draft PDF of a paper on Fast CASPaxos. The accompanying repository also includes a TLA+ model checked with TLC, a deterministic simulation suite with Porcupine linearizability checking, and some toy benchmark workloads for various scenarios topologies. When reading the code, note that while I spent time on the core (proposer, acceptor, types, etc), a lot of what surrounds it is generated by coding agents, especially the benchmark harness.